#install.packages("cograph")

#install.packages("Nestimate")

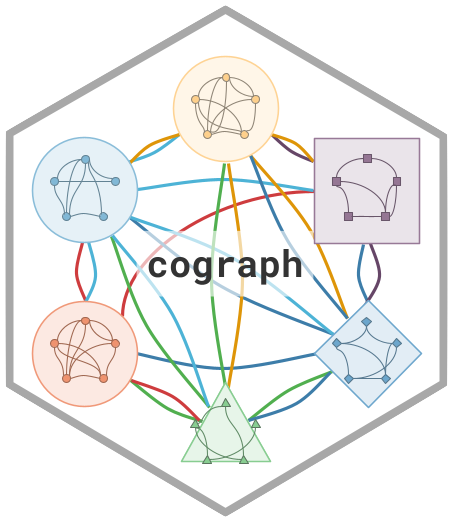

Multi-Cluster Multi-Level Visualization with plot_mcml: A Complete Guide

2026-04-09

Source: vignettes/articles/cograph-tutorial-mcml.qmd

1 Introduction

Large networks whose nodes span multiple constructs, components, or factors are fundamentally hard to work with. Consider a network of 20 nodes, each belonging to one of four constructs: motivation, engagement, emotions, and collaboration. It has 400 possible directed connections (20 × 20, self-loops included), which makes it difficult to interpret directly. The usual workarounds are to merge the nodes of each construct into one, losing their internal relations, or to plot all 400 edges and get lost in the details. The Multi-Cluster Multi-Level network (MCML) is designed to solve this problem. It builds a network within each cluster that keeps that cluster's internal connections, and it summarizes all inter-cluster connections in a macro-level network. In this way, we preserve the integrity of the data and the value of its fine-grained details. Think of MCML as a network of networks.
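A quick count shows what this decomposition buys. Assuming, purely for illustration, that the 20 nodes split evenly into the four constructs (5 nodes each), the flat network has 20 × 20 possible directed edges, whereas MCML draws four 5 × 5 within-cluster networks plus one 4 × 4 macro network:

```r
# Assumption for illustration: the 20 nodes split evenly into 4 constructs
n_nodes      <- 20
n_clusters   <- 4
cluster_size <- n_nodes / n_clusters          # 5 nodes per construct

flat_edges   <- n_nodes^2                     # every directed pair, self-loops included
within_edges <- n_clusters * cluster_size^2   # four 5 x 5 within-cluster networks
macro_edges  <- n_clusters^2                  # one 4 x 4 macro network

c(flat = flat_edges, within = within_edges, macro = macro_edges)
```

The 300 between-cluster pairs (400 - 100) are not discarded: they are what the 16 macro edges summarize.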

The MCML workflow has a critical analytical advantage: once built, the macro network and each cluster network are full TNA networks in their own right. This means you can compute centrality, run bootstrap stability analysis, permutation tests, and even higher-order pathway analysis (HYPA) on each cluster independently. The MCML is not just a visualization — it is a decomposition of a complex network into interpretable, analyzable sub-networks.

Installation

Both cograph and Nestimate are on CRAN. Nestimate handles the MCML computation (build_mcml, as_tna); cograph handles the visualization (plot_mcml, splot).

2 Building the MCML

We use the human_long dataset bundled with Nestimate — 10,796 coded human actions from 429 human-AI programming sessions. The coding scheme has 9 codes grouped into 3 clusters — codes become nodes, clusters become groups.

plot_mcml() displays both levels in a single figure. The bottom layer shows every node inside its cluster, together with the within-cluster edges and the edges between cluster shells. The top layer collapses each cluster into a single macro-level node, with edges carrying the aggregated inter-cluster flow. Dashed inter-layer lines connect each node to its cluster's macro node, making the hierarchical mapping explicit.

library(Nestimate)
library(cograph)

data("human_long", package = "Nestimate")

net <- build_network(
  human_long,
  actor = "session_id", action = "code", time = "timestamp",
  method = "relative"
)

clusters <- list(
  Directive     = c("Specify", "Command", "Request"),
  Evaluative    = c("Verify", "Frustrate", "Correct", "Refine"),
  Metacognitive = c("Interrupt", "Inquire")
)

mcml_obj <- Nestimate::build_mcml(net, clusters, type = "tna")

mcml_obj

MCML Network
============
Type: tna | Method: sum
Nodes: 9 | Clusters: 3
Transitions: 10270
Macro: 5188 | Per-cluster: 5082
Clusters:
Directive (3): Specify, Command, Request
Evaluative (4): Verify, Frustrate, Correct, Refine
Metacognitive (2): Interrupt, Inquire
Macro (cluster-level) weights:
              Directive Evaluative Metacognitive
Directive        0.6056     0.2241        0.1703
Evaluative       0.4774     0.4057        0.1169
Metacognitive    0.4170     0.3275        0.2555

- Directive: the human telling the AI what to do (commanding, specifying requirements, requesting)

- Evaluative: the human judging AI output (correcting, expressing frustration, refining, verifying)

- Metacognitive: the human stepping back to think or redirect (inquiring, interrupting)
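The macro weights printed above are row-normalized: each row sums to 1 (for example, 0.6056 + 0.2241 + 0.1703 = 1). The base-R sketch below shows one way such a table can arise, assuming that transitions are counted within each session only, summed over cluster blocks (the "Method: sum" in the printout), and then divided by row totals. It uses hypothetical toy sessions instead of human_long and is not Nestimate's internal code:

```r
# Hypothetical toy sessions; each vector is one actor's ordered actions
sessions <- list(
  c("Command", "Specify", "Verify", "Correct", "Command"),
  c("Request", "Inquire", "Specify", "Refine"),
  c("Command", "Frustrate", "Interrupt", "Command", "Specify")
)
clusters <- list(
  Directive     = c("Specify", "Command", "Request"),
  Evaluative    = c("Verify", "Frustrate", "Correct", "Refine"),
  Metacognitive = c("Interrupt", "Inquire")
)
codes <- unlist(clusters, use.names = FALSE)

# Count consecutive pairs within each session (never across sessions)
counts <- matrix(0, length(codes), length(codes), dimnames = list(codes, codes))
for (s in sessions) {
  for (i in seq_len(length(s) - 1)) {
    counts[s[i], s[i + 1]] <- counts[s[i], s[i + 1]] + 1
  }
}

# Map each code to its cluster, then sum the counts in each cluster block
membership <- setNames(rep(names(clusters), lengths(clusters)), codes)
macro_counts <- sapply(names(clusters), function(to) {
  sapply(names(clusters), function(from) {
    sum(counts[membership == from, membership == to])
  })
})   # rows = from cluster, columns = to cluster

# Row-normalize so each row holds cluster-to-cluster transition probabilities
macro <- macro_counts / rowSums(macro_counts)
round(macro, 3)
```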

splot(mcml_obj)

2.1 Cluster definitions

Clusters can be defined in three ways. All produce the same result:

Named list (most common):

Data frame with node and group columns:

Extracted from the data when the grouping variable already exists:
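All three forms encode the same node-to-cluster mapping. As a base-R illustration (not the package's own conversion code), a data frame with node and group columns converts to the named-list form with split(), which is why the two specifications are interchangeable:

```r
# Named-list form, as used throughout this tutorial
clusters <- list(
  Directive     = c("Specify", "Command", "Request"),
  Evaluative    = c("Verify", "Frustrate", "Correct", "Refine"),
  Metacognitive = c("Interrupt", "Inquire")
)

# Equivalent data-frame form: one row per node, with its group label
cluster_df <- data.frame(
  node  = unlist(clusters, use.names = FALSE),
  group = rep(names(clusters), lengths(clusters)),
  stringsAsFactors = FALSE
)
cluster_df

# Back to a named list; the factor levels preserve the cluster order
back <- split(cluster_df$node,
              factor(cluster_df$group, levels = names(clusters)))
identical(back, clusters)
```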

Where Do Clusters Come From?

- Theory: Define groups based on domain knowledge, as above.

- Community detection: Use detect_communities() in cograph to discover structure algorithmically.

- External grouping variables: If your nodes have metadata (e.g., department, brain region, country), use that.

2.2 Decomposing into individual networks

as_tna() converts the MCML into individual networks — a macro network and one network per cluster:

Each element is a full TNA network that can be plotted, analyzed, and bootstrapped independently:

grp <- Nestimate::as_tna(mcml_obj)
splot(grp)

3 The Two-Layer Architecture

The plot reads from bottom to top:

3.1 Bottom Layer: The Cluster Networks

The bottom layer shows every node arranged inside its cluster’s elliptical shell. Two types of edges are drawn:

Cluster edges connect nodes inside the same shell. They carry the original pairwise weights (here, transition probabilities) with no aggregation: if the Command → Specify transition probability is 0.303, that exact edge appears inside the Directive shell. By default, cluster edges are drawn with low opacity (edge_alpha = 0.35) and thin widths.

Inter-cluster edges connect one cluster shell to another. These are aggregated from all pairwise edges between the two clusters. The aggregation method ("sum", "mean", or "max") controls how individual edges collapse into one value.

The bottom layer layout is controlled by:

- spacing: distance from the center to each cluster's position

- skew_angle: perspective tilt in degrees (0 = top-down, 60 = table-top, 90 = flat line)

- shape_size: radius of each cluster's elliptical shell

- node_radius_scale: how far from the shell center nodes are placed (0 = center, 1 = edge)

3.2 Top Layer: The Macro Network

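The top layer collapses each cluster to a single pie-chart node. What the colored slice represents is set by summary_pie (see Section 6.6):

- With summary_pie = "inits" (the default), the colored slice is the cluster's share of the initial state distribution.

- With summary_pie = "self", the colored slice is the proportion of the cluster's total outgoing flow that stays inside it, and the gray slice is the proportion that flows to other clusters.

Under "self", a mostly-colored pie means the cluster retains most of its flow internally, while a mostly-gray pie marks an exporter: its nodes transition frequently to other clusters.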

Macro edges between pies carry the aggregated inter-cluster weights. Self-loops appear as small arcs when flow remaining inside the cluster exceeds the minimum threshold.

Dashed inter-layer lines connect each node in the bottom layer to its cluster’s pie in the top layer, making the hierarchical mapping explicit.

4 Edge Types

4.1 Cluster Edges

Cluster edges are directed edges connecting nodes inside the same shell. They carry the original pairwise weights — no aggregation takes place. For example, if the transition probability from “Command” to “Specify” is 0.303, that exact value appears as an edge inside the Directive shell.

By default, cluster edges are drawn subtly (edge_alpha = 0.35, thin widths) so they do not overwhelm the between-cluster structure. Increase edge_alpha and widen edge_width_range when the within-cluster dynamics are the focus of your analysis; decrease them when the macro-level flow matters more.

| Parameter | Default | Controls |
|---|---|---|
| edge_width_range | c(0.3, 1.3) | Line width range |
| edge_alpha | 0.35 | Transparency |
| edge_labels | FALSE | Show weight labels |

The left panel below uses the defaults. The right panel increases both width and opacity, making within-cluster transitions the visual focus:

4.2 Inter-Cluster Edges

Inter-cluster edges are directed edges connecting one cluster shell to another. Unlike cluster edges, they carry aggregated weights: the individual node-to-node transitions between two clusters are collapsed into a single value by the aggregation method (see Section 6.7).

By default, arrowheads are hidden (between_arrows = FALSE) because the density of arrows between shells can create visual clutter. Enable them when the directionality of inter-cluster flow is important to communicate. A useful pattern is to dim the within-cluster edges while bolding the between-cluster ones, which shifts the reader’s attention to the macro-level flow structure:

| Parameter | Default | Controls |
|---|---|---|
| between_edge_width_range | c(0.5, 2.0) | Line width range |
| between_edge_alpha | 0.6 | Transparency |
| between_arrows | FALSE | Show arrowheads |

par(mfrow = c(1, 2))
plot_mcml(net, clusters, title = "Default inter-cluster")
plot_mcml(net, clusters,
  edge_width_range = c(0.2, 0.8), edge_alpha = 0.2,
  between_edge_width_range = c(1, 4), between_edge_alpha = 0.8,
  between_arrows = TRUE,
  title = "Bold inter-cluster + arrows"
)

4.3 Macro Edges

Macro edges are directed edges between the pie-chart summary nodes in the top layer. They represent the total aggregated flow between clusters. Because the top layer is a bird’s-eye summary, these edges are drawn with higher default opacity (summary_edge_alpha = 0.7) and arrowheads enabled by default (summary_arrows = TRUE).

Adding summary_edge_labels = TRUE prints the numeric weight on each edge, which is particularly useful in mode = "tna" where the values are transition probabilities. The summary_arrow_size parameter controls how large the arrowheads are — increase it for presentation slides, decrease it for dense networks where arrows overlap:

| Parameter | Default | Controls |
|---|---|---|
| summary_edge_width_range | c(0.5, 2.0) | Line width range |
| summary_edge_alpha | 0.7 | Transparency |
| summary_arrows | TRUE | Arrowheads |
| summary_edge_labels | FALSE | Show weight labels |

4.4 Inter-Layer Lines

Dashed inter-layer lines connect each detail node in the bottom layer to its cluster’s pie-chart node in the top layer. They serve as visual scaffolding, making the hierarchical mapping explicit — the reader can trace which nodes belong to which cluster.

These lines are purely structural and carry no data. Set inter_layer_alpha low (e.g., 0.15) when the scaffolding should recede into the background, or high (e.g., 0.8) when the cluster membership itself is the point of the figure:

5 Edge Labels and Thresholding

5.1 Edge Labels

Edge labels print the numeric weight directly on each edge. There are two independent switches: edge_labels controls within-cluster edges in the bottom layer, and summary_edge_labels controls macro edges in the top layer. You can enable one, both, or neither.

Label appearance is controlled by edge_label_size (bottom layer), summary_edge_label_size (top layer), edge_label_color, and edge_label_digits (decimal places, shared by both layers). For dense networks, keep labels on the macro layer only — within-cluster labels can quickly become unreadable when edges overlap:

plot_mcml(net, clusters,
  edge_labels = TRUE, edge_label_size = 0.45,
  summary_edge_labels = TRUE, summary_edge_label_size = 0.7,
  edge_label_digits = 2,
  title = "Labels on all edges"
)

When mode = "tna" is set, the weight matrix is row-normalized to transition probabilities (each row sums to 1) and edge labels are automatically enabled on both layers, so you do not need to set edge_labels or summary_edge_labels manually. This is the recommended mode when the research question is about sequential transition patterns rather than raw co-occurrence counts:

plot_mcml(net, clusters,
  mode = "tna",
  title = "TNA mode"
)

5.2 Thresholding

The minimum parameter sets an absolute weight threshold: any edge with a weight below this value is hidden from the plot. This is especially useful for dense networks where many near-zero edges create visual noise. The threshold applies to all edge types — within-cluster, between-cluster, and macro — so raising it progressively strips the plot down to its structural backbone.

A good strategy is to start with minimum = 0 to see the full picture, then raise it incrementally (e.g., 0.02, 0.05, 0.10) until only the substantively meaningful transitions remain. In the example below, raising the threshold from 0 to 0.05 removes the weakest transitions and reveals the dominant flow patterns:
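Conceptually, thresholding is a mask on the weight matrix. The sketch below uses a hypothetical 3 × 3 row-normalized matrix and assumes that minimum hides every weight strictly below the cutoff, as described above; plot_mcml() may apply the cutoff while drawing rather than by editing a matrix, so read this as an illustration of the rule, not of the package internals:

```r
# Hypothetical row-normalized weight matrix (rows = from, columns = to)
W <- matrix(c(0.60, 0.37, 0.03,
              0.48, 0.44, 0.08,
              0.01, 0.64, 0.35),
            nrow = 3, byrow = TRUE,
            dimnames = list(c("A", "B", "C"), c("A", "B", "C")))

# Hide (zero out) every edge whose weight falls below the threshold
apply_minimum <- function(W, minimum) {
  W[W < minimum] <- 0
  W
}

# Number of edges that survive as the threshold is raised step by step
surviving <- sapply(c(0, 0.02, 0.05, 0.10),
                    function(m) sum(apply_minimum(W, m) > 0))
surviving
```

Each step removes only the weakest remaining edge here, which is the incremental stripping-down the strategy above relies on.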

6 Node and Shell Styling

6.1 Colors

Each cluster is assigned one color that propagates consistently across every visual element: the shell fill, node fill, within-cluster edges, the pie-chart slice in the top layer, and the legend entry. When colors is NULL (the default), a colorblind-safe palette is generated automatically. To override it, pass a character vector with one color per cluster, in the same order as the cluster list.

Choose colors with sufficient contrast between clusters — avoid hues that are too similar. Muted, low-saturation palettes work well for publication figures, while saturated palettes are more effective for slides and posters:

6.2 Node Shapes

Both layers support four shapes: "circle" (default), "square", "diamond", and "triangle". The node_shape parameter controls detail nodes in the bottom layer, while cluster_shape controls the summary pie-chart nodes in the top layer. Each can be a single value (applied uniformly) or a vector of the same length as the number of nodes or clusters, allowing per-element customization.

Mixing shapes between layers helps the reader distinguish the two levels at a glance. In the example below, detail nodes use diamonds while each cluster’s summary node gets a different shape:

6.3 Sizes

Three size parameters control the main visual elements independently. shape_size sets the radius of each cluster’s elliptical shell in the bottom layer — increase it when nodes feel cramped inside a shell. node_size controls individual detail nodes. summary_size controls the pie-chart summary nodes in the top layer.

Balancing these three sizes determines the visual hierarchy: a large summary_size relative to node_size draws the reader’s eye to the macro level first, while equalizing them gives both levels comparable visual weight:

plot_mcml(net, clusters,
  shape_size = 1.6, node_size = 2.5, summary_size = 5,
  title = "Larger elements"
)

6.4 Shell Styling

Cluster shells are the semi-transparent ellipses in the bottom layer that visually group nodes. Two parameters control their appearance: shell_alpha (fill transparency, 0–1) and shell_border_width (border line width). Higher shell_alpha values create stronger visual grouping but may obscure the edges underneath; lower values keep the shells subtle, letting the network structure dominate. Increasing shell_border_width makes the cluster boundaries more prominent, which helps when shells overlap slightly:

plot_mcml(net, clusters,
  shell_alpha = 0.3, shell_border_width = 4,
  title = "Bold shells"
)

6.5 Node Borders

Fine-tune borders on both layers independently. Detail nodes accept node_border_color; summary pie-chart nodes accept summary_border_color and summary_border_width:

par(mfrow = c(1, 2))
plot_mcml(net, clusters,
  node_border_color = "black",
  summary_border_color = "black", summary_border_width = 4,
  title = "Heavy dark borders"
)
plot_mcml(net, clusters,
  colors = c("#E63946", "#457B9D", "#2A9D8F"),
  node_border_color = "gray60",
  summary_border_color = "gray10", summary_border_width = 3,
  title = "Subtle node + bold summary borders"
)

6.6 Summary Pie Interpretation

The colored slice of each summary pie chart can represent two different quantities, controlled by summary_pie:

- "inits" (default): the cluster's share of the initial state distribution. Answers: “how often do sequences start in this cluster?”

- "self": the cluster's self-retention ratio (self-loop weight / total out-strength). Answers: “how sticky is this cluster, and how much flow stays inside?”

par(mfrow = c(1, 2))
plot_mcml(net, clusters,
  summary_pie = "inits",
  summary_labels = TRUE, summary_label_size = 0.9,
  title = "summary_pie = \"inits\""
)
plot_mcml(net, clusters,
  summary_pie = "self",
  summary_labels = TRUE, summary_label_size = 0.9,
  title = "summary_pie = \"self\""
)

A mostly-colored pie under "inits" means the cluster is a common starting point. Under "self", it means the cluster retains most of its outgoing flow internally: transitions loop back rather than escaping to other clusters.
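Both pie quantities are simple ratios. The sketch below computes "self" as self-loop weight divided by total out-strength, applied for illustration to the macro weights that mcml_obj printed in Section 2, and "inits" as the share of sequences whose first action falls in each cluster, using hypothetical first actions. The pies that plot_mcml() draws depend on its mode and aggregation settings, so treat these numbers as a demonstration of the formulas rather than a readout of the figure:

```r
# Macro weights as printed by mcml_obj in Section 2 (rows = from, columns = to)
macro <- matrix(c(0.6056, 0.2241, 0.1703,
                  0.4774, 0.4057, 0.1169,
                  0.4170, 0.3275, 0.2555),
                nrow = 3, byrow = TRUE,
                dimnames = rep(list(c("Directive", "Evaluative", "Metacognitive")), 2))

# "self": self-loop weight / total out-strength (row sum)
self_retention <- diag(macro) / rowSums(macro)
round(self_retention, 4)

# "inits": share of sequences that start in each cluster
# (hypothetical first actions, already mapped to their clusters)
first_cluster <- c("Directive", "Directive", "Evaluative", "Directive", "Metacognitive")
inits <- prop.table(table(factor(first_cluster, levels = rownames(macro))))
inits
```

Because these macro weights are already row-normalized, the self-retention ratio reduces to the diagonal: Directive keeps about 61% of its flow, Metacognitive about 26%.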

6.7 Aggregation Method

When building the macro network from individual edges, the aggregation parameter controls how pairwise weights collapse into cluster-to-cluster values:

- "sum" (default): total flow volume. Larger clusters naturally produce higher values.

- "mean": average flow per node pair. Normalizes for cluster size differences.

- "max": strongest single edge. Highlights the dominant connection.

par(mfrow = c(1, 3), mar = c(1, 1, 2, 1))
plot_mcml(net, clusters, aggregation = "sum",
  summary_edge_labels = TRUE, summary_edge_label_size = 0.7,
  title = "aggregation = \"sum\""
)
plot_mcml(net, clusters, aggregation = "mean",
  summary_edge_labels = TRUE, summary_edge_label_size = 0.7,
  title = "aggregation = \"mean\""
)
plot_mcml(net, clusters, aggregation = "max",
  summary_edge_labels = TRUE, summary_edge_label_size = 0.7,
  title = "aggregation = \"max\""
)

7 Layout Tuning

7.1 Spacing and Perspective

Two parameters control the overall geometry of the bottom layer. spacing sets the distance from the center to each cluster’s position — larger values spread clusters apart, reducing shell overlap at the cost of a wider figure. skew_angle tilts the bottom layer to create a perspective effect: 0 degrees is a top-down view (fully circular), 60 degrees (default) gives a natural table-top perspective, and 90 degrees flattens everything to a horizontal line.

As a rule of thumb, fewer clusters tolerate tighter spacing and steeper tilt, while networks with many clusters need wider spacing and milder tilt to avoid overlap:
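One simple way to model the tilt, assumed here for illustration only (plot_mcml() may use a different projection), is to place cluster centers on a circle of radius spacing and shrink their vertical coordinate by cos(skew_angle). That reproduces the three reference points described above: 0 degrees leaves the circle intact, 60 degrees halves its height, and 90 degrees collapses it to a line. The helper name cluster_centers() is hypothetical:

```r
# Hypothetical helper: cluster centers on a circle, tilted by skew_angle
cluster_centers <- function(n_clusters, spacing = 3, skew_angle = 60) {
  theta <- 2 * pi * (seq_len(n_clusters) - 1) / n_clusters
  tilt  <- cos(skew_angle * pi / 180)   # 1 at 0 degrees, 0 at 90 degrees
  data.frame(x = spacing * cos(theta),
             y = spacing * sin(theta) * tilt)
}

# Vertical extent of three clusters at the three reference angles
h <- sapply(c(0, 60, 90),
            function(a) diff(range(cluster_centers(3, skew_angle = a)$y)))
round(h, 3)
```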

7.2 Top-Layer Shape

The summary pie-chart nodes in the top layer are arranged on an oval whose proportions are set by top_layer_scale = c(x_scale, y_scale), expressed as multiples of spacing. The first value controls the horizontal spread; the second controls the vertical spread. A wide, flat oval (e.g., c(1.2, 0.15)) spreads the summary nodes horizontally, which works well when there are many clusters. A narrower, taller oval (e.g., c(0.5, 0.4)) stacks them more vertically, which can be useful when the bottom layer is already wide:

7.3 Inter-Layer Gap

The inter_layer_gap parameter controls the vertical distance between the top of the bottom layer and the bottom of the top layer, expressed as a multiple of spacing. A smaller gap (e.g., 0.3) creates a compact, integrated figure where the two layers feel like parts of one visualization. A larger gap (e.g., 1.2) separates them visually, which can help when edges cross between layers and create clutter. Adjust this together with top_layer_scale to find the right balance:

7.4 Node Radius Scale

Inside each cluster shell, nodes are arranged on a circle whose radius is shape_size * node_radius_scale. The node_radius_scale parameter (0–1) controls how far from the center nodes sit: a low value (e.g., 0.3) packs nodes tightly near the center, while a high value (e.g., 0.75) pushes them outward toward the shell border.

Tight placement keeps nodes compact but may cause label overlap. Spread placement uses the shell space more fully but can place nodes close to the shell edge, where they may interfere with between-cluster edges. A value around 0.5–0.6 works well for most networks:
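The radius rule stated above, shape_size * node_radius_scale, can be sketched directly. Assuming nodes are spaced evenly around the shell center (the starting angle plot_mcml() uses is not documented here), the hypothetical helper below returns each node's offset from that center; every node sits at the same distance, which is the product of the two parameters:

```r
# Hypothetical helper: node offsets from the center of one cluster shell
node_offsets <- function(n_nodes, shape_size = 1.2, node_radius_scale = 0.55) {
  r     <- shape_size * node_radius_scale   # ring radius inside the shell
  theta <- 2 * pi * (seq_len(n_nodes) - 1) / n_nodes
  data.frame(dx = r * cos(theta), dy = r * sin(theta))
}

# The Evaluative cluster has 4 nodes: compare tight vs. spread placement
tight  <- node_offsets(4, node_radius_scale = 0.3)
spread <- node_offsets(4, node_radius_scale = 0.75)
radii  <- c(tight  = sqrt(tight$dx[1]^2 + tight$dy[1]^2),
            spread = sqrt(spread$dx[1]^2 + spread$dy[1]^2))
radii
```

With the default shape_size of 1.2, the two settings put nodes at 0.36 and 0.9 units from the shell center.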

8 Labels and Legend

8.1 Node Labels

Node labels identify each detail node in the bottom layer. They are enabled by default (show_labels = TRUE). Three parameters control their appearance: label_size adjusts the text size (default auto-scales to 0.6), label_color sets the text color, and label_position controls placement relative to the node (see the next section). For publication figures, switching label_color to black gives sharper contrast against light shells:

plot_mcml(net, clusters,
  show_labels = TRUE, label_size = 0.55, label_color = "black",
  title = "Styled labels"
)

8.2 Label Positioning

By default, node labels appear above each node (label_position = 3). The position follows R’s convention: 1 = below, 2 = left, 3 = above, 4 = right. The same applies to summary labels via summary_label_position:

par(mfrow = c(1, 2))
plot_mcml(net, clusters,
  label_position = 1, summary_label_position = 1,
  title = "Labels below (position = 1)"
)
plot_mcml(net, clusters,
  label_position = 4, summary_label_position = 4,
  title = "Labels right (position = 4)"
)

8.3 Label Abbreviation

When node names are long or clusters contain many nodes, labels will overlap and become unreadable. The label_abbrev parameter offers three modes: NULL (default, no abbreviation), an integer (truncate all labels to that many characters), or "auto" (adaptively abbreviate based on the total number of nodes — more nodes triggers shorter labels). Truncation adds an ellipsis when a label is shortened. This is often preferable to turning labels off entirely, as it preserves at least partial identification:
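The integer mode amounts to plain truncation. The hypothetical helper below assumes the ellipsis is appended after the first n characters (whether plot_mcml() counts the ellipsis toward n, or uses a Unicode ellipsis, is not stated here) and leaves labels that already fit untouched; the "auto" rule is not reproduced:

```r
# Hypothetical helper mimicking an integer label_abbrev
abbrev_labels <- function(labels, n) {
  too_long <- nchar(labels) > n
  labels[too_long] <- paste0(substr(labels[too_long], 1, n), "...")
  labels
}

abbrev_labels(c("Specify", "Frustrate", "Interrupt", "Inquire"), 7)
```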

8.4 Summary Label Styling

Summary labels (cluster names on pie-chart nodes) have their own color and size controls independent from node labels:

par(mfrow = c(1, 2))
plot_mcml(net, clusters,
  summary_labels = TRUE,
  summary_label_size = 1.2, summary_label_color = "black",
  summary_label_position = 3,
  title = "Large labels above"
)
plot_mcml(net, clusters,
  summary_labels = TRUE,
  summary_label_size = 0.7, summary_label_color = "gray50",
  summary_label_position = 1,
  title = "Small gray labels below"
)

8.5 Legend Styling

The legend maps cluster names to colors. Beyond legend_position, you can control legend_size (text size) and legend_pt_size (symbol size):

par(mfrow = c(1, 2))
plot_mcml(net, clusters,
  legend = TRUE, legend_position = "bottom",
  legend_size = 1.0, legend_pt_size = 2.0,
  title = "Large bottom legend"
)
plot_mcml(net, clusters,
  legend = TRUE, legend_position = "left",
  legend_size = 0.6, legend_pt_size = 0.8,
  title = "Compact left legend"
)

8.6 Title and Subtitle Styling

Title and subtitle sizes are controlled by title_size and subtitle_size:

plot_mcml(net, clusters,
  title = "Human-AI Interaction Clusters",
  title_size = 1.5,
  subtitle = "Transition probabilities across coding categories",
  subtitle_size = 0.8,
  legend = TRUE, legend_position = "bottom"
)

8.7 Macro Labels and Legend

Combining summary_labels with legend provides two complementary ways for the reader to map visual elements to cluster identities. Summary labels annotate each pie-chart node directly, while the legend provides a standalone color key. For presentation slides, using both together ensures that the audience can decode the figure regardless of whether they are reading it up close or from the back of a room:

plot_mcml(net, clusters,
  summary_labels = TRUE, summary_label_size = 1.0,
  legend = TRUE, legend_position = "bottom",
  title = "Vibe Coding: Human Interaction Clusters"
)

9 Minimal and Maximal Plots

9.1 Minimal: Clean Presentation

A minimal plot strips away all annotation — labels, legends, and arrows — leaving only the network structure itself. This style is useful for graphical abstracts, figure thumbnails, or situations where the plot is accompanied by a separate caption that provides context. Lowering inter_layer_alpha and edge_alpha further softens the scaffolding:

plot_mcml(net, clusters,
  show_labels = FALSE, summary_labels = FALSE, legend = FALSE,
  summary_arrows = FALSE, inter_layer_alpha = 0.2, edge_alpha = 0.2,
  title = "Minimal"
)

9.2 Maximal: Full Annotation

At the other extreme, a maximally annotated plot enables every available label and visual cue. This is useful during exploratory analysis when you want to read exact transition values without consulting the underlying data. Combining mode = "tna" (which auto-enables edge labels) with explicit label sizing, a visible legend, and slightly higher shell_alpha and inter_layer_alpha creates a figure that is fully self-contained:

plot_mcml(net, clusters,
  mode = "tna",
  edge_labels = TRUE, edge_label_size = 0.4,
  summary_edge_labels = TRUE, summary_edge_label_size = 0.7,
  edge_label_digits = 2,
  show_labels = TRUE, label_size = 0.5,
  summary_labels = TRUE, summary_label_size = 0.9,
  inter_layer_alpha = 0.6, shell_alpha = 0.25,
  legend = TRUE, legend_position = "right",
  title = "Human-AI Interaction: Full Annotation",
  subtitle = "TNA mode with all labels"
)

9.3 Publication-Ready Example

A publication-ready figure coordinates all styling parameters into a coherent visual design. The example below demonstrates several key choices: a muted three-color palette with good contrast, mode = "tna" for interpretable transition probabilities, summary_pie = "self" to highlight cluster stickiness, subtle inter-layer lines (inter_layer_alpha = 0.25) that do not compete with the data, and balanced sizing across both layers. The inline comments in the code mark each group of parameters so you can adapt them to your own network:

plot_mcml(net, clusters,
  mode = "tna",
  # Aggregation (matches the "mean aggregation" subtitle below)
  aggregation = "mean",
  colors = c("#264653", "#E76F51", "#2A9D8F"),
  # Layout
  spacing = 3.5, skew_angle = 55,
  shape_size = 1.4, inter_layer_gap = 0.7,
  # Shells
  shell_alpha = 0.12, shell_border_width = 2.5,
  # Detail nodes
  node_size = 2.2, node_border_color = "gray40",
  # Summary nodes
  summary_size = 4.5, summary_pie = "self",
  summary_border_color = "gray20", summary_border_width = 3,
  # Edges
  edge_width_range = c(0.3, 1.5), edge_alpha = 0.3,
  between_edge_width_range = c(0.5, 2.5), between_edge_alpha = 0.5,
  summary_edge_width_range = c(0.8, 3), summary_edge_alpha = 0.8,
  summary_arrows = TRUE, summary_arrow_size = 0.12,
  # Labels
  show_labels = TRUE, label_size = 0.5, label_color = "gray15",
  summary_labels = TRUE, summary_label_size = 0.9,
  summary_label_color = "gray10",
  edge_labels = TRUE, edge_label_size = 0.4,
  summary_edge_labels = TRUE, summary_edge_label_size = 0.65,
  edge_label_digits = 2,
  # Inter-layer
  inter_layer_alpha = 0.25,
  # Title & legend
  title = "Human-AI Interaction: Cluster Flow Structure",
  title_size = 1.3,
  subtitle = "TNA mode: self-retention pies, mean aggregation",
  subtitle_size = 0.85,
  legend = TRUE, legend_position = "right",
  legend_size = 0.75
)

10 Scaling to Larger Networks

For dense or cluttered networks, suppress labels and raise the threshold to reveal the backbone:

11 Downstream Analysis

The key insight of MCML is that both levels are full TNA networks. The macro network and each cluster network carry their own weight matrices, initial probabilities, and sequence data. This means many Nestimate analysis workflows — centrality, bootstrap, permutation, and higher-order pathway analysis — work on them directly.

11.1 Decomposing to individual networks

as_tna() converts the MCML into a netobject_group:

mcml_obj <- Nestimate::build_mcml(net, clusters, type = "tna")
grp <- Nestimate::as_tna(mcml_obj)
names(grp)

[1] "macro"         "Directive"     "Evaluative"    "Metacognitive"

11.2 Centrality at each level

centrality() reveals which nodes drive transitions at each level. At the macro level, it identifies which cluster is most central in the inter-cluster flow. At the cluster level, it identifies the categories that drive transitions inside each cluster:

cograph::centrality(grp$macro, measures = c("strength", "betweenness", "closeness", "pagerank"))

           node strength_all betweenness closeness_all  pagerank
1     Directive     1.288812           0      2.535509 0.4041004
2    Evaluative     1.145959           0      2.932623 0.3406137
3 Metacognitive     1.031764           0      3.481484 0.2552859
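The macro strength_all values above can be checked by hand. Assuming strength_all is in-strength plus out-strength with self-loops excluded (an assumption that matches the printed numbers to within the rounding of the four-decimal macro weights), the macro weights from Section 2 give:

```r
# Macro weights as printed by mcml_obj in Section 2 (rows = from, columns = to)
macro <- matrix(c(0.6056, 0.2241, 0.1703,
                  0.4774, 0.4057, 0.1169,
                  0.4170, 0.3275, 0.2555),
                nrow = 3, byrow = TRUE,
                dimnames = rep(list(c("Directive", "Evaluative", "Metacognitive")), 2))

off_diag <- macro
diag(off_diag) <- 0                                    # drop self-loops

strength_all <- rowSums(off_diag) + colSums(off_diag)  # out-strength + in-strength
round(strength_all, 4)
```

Most of Directive's strength is incoming flow (0.8944 in versus 0.3944 out), whereas Metacognitive's is mostly outgoing.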

cograph::centrality(grp$Directive, measures = c("strength", "betweenness", "closeness", "pagerank"))

     node strength_all betweenness closeness_all  pagerank
1 Specify     1.898544           0      2.482693 0.4142881
2 Command     1.297247           1      2.555310 0.3546145
3 Request     1.317157           1      3.777717 0.2310974

cograph::centrality(grp$Evaluative, measures = c("strength", "betweenness", "closeness", "pagerank"))

       node strength_all betweenness closeness_all  pagerank
1    Verify     1.171973           1      2.808572 0.1584953
2 Frustrate     1.699403           0      1.750000 0.3041676
3   Correct     1.475489           0      1.760264 0.2589816
4    Refine     1.491485           0      2.004370 0.2783554

11.3 Bootstrap stability per cluster
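The resampling idea behind a stability check can be illustrated in base R. This sketch is not bootstrap_network()'s algorithm: it assumes sessions are the resampling unit, draws them with replacement, recomputes one relative transition probability each time, and reports a percentile interval. Hypothetical toy sessions stand in for the real data, and trans_prob() is a hypothetical helper:

```r
set.seed(2026)

# Hypothetical toy sessions (each vector is one actor's ordered actions)
sessions <- list(
  c("Command", "Specify", "Verify", "Command"),
  c("Command", "Specify", "Command", "Request"),
  c("Request", "Command", "Verify"),
  c("Command", "Request", "Specify", "Command", "Specify")
)

# Relative probability of from -> to, pooling pairs within sessions
trans_prob <- function(sessions, from, to) {
  pairs <- do.call(rbind, lapply(sessions, function(s)
    cbind(head(s, -1), tail(s, -1))))
  n_from <- sum(pairs[, 1] == from)
  if (n_from == 0) return(NA_real_)
  sum(pairs[, 1] == from & pairs[, 2] == to) / n_from
}

observed <- trans_prob(sessions, "Command", "Specify")
boot <- replicate(500, trans_prob(sample(sessions, replace = TRUE),
                                  "Command", "Specify"))
quantile(boot, c(0.025, 0.975), na.rm = TRUE)
```

A wide interval around the observed value (0.5 here) signals a transition whose strength depends heavily on which sessions happen to be in the sample.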

Bootstrap each cluster network independently to assess which transitions are stable:

boot_macro <- Nestimate::bootstrap_network(grp$macro, iter = 500)
boot_dir   <- Nestimate::bootstrap_network(grp$Directive, iter = 500)
boot_eval  <- Nestimate::bootstrap_network(grp$Evaluative, iter = 500)
boot_meta  <- Nestimate::bootstrap_network(grp$Metacognitive, iter = 500)

splot(boot_macro, title = "Macro (inter-cluster)")

11.4 Higher-order pathway analysis (HYPA)

Standard TNA models first-order transitions: which state follows the current state. But real behavioral sequences often have memory — the transition from state B depends not just on B but on the path that led to B. HYPA (Hypothesis testing for Path Anomalies) detects these higher-order dependencies by comparing observed pathway frequencies against a multi-hypergeometric null model (LaRock et al., 2020).
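The notion of memory can be made concrete by comparing observed three-step paths with what a first-order model predicts. This base-R sketch swaps in a simple first-order Markov expectation, count(a → b) × P(c | b), for HYPA's multi-hypergeometric null, and it performs no significance test; it only shows the kind of discrepancy HYPA is built to detect. The sequences and helper names are hypothetical:

```r
# Hypothetical sequences in which the step after B depends on what preceded B
seqs <- list(c("A", "B", "C"), c("A", "B", "C"),
             c("D", "B", "E"), c("D", "B", "E"))

# First-order transition counts and row-normalized probabilities
pairs <- do.call(rbind, lapply(seqs, function(s)
  cbind(from = head(s, -1), to = tail(s, -1))))
N2 <- table(pairs[, "from"], pairs[, "to"])
P  <- prop.table(N2, margin = 1)

# Observed three-step paths first -> mid -> last
keys <- unlist(lapply(seqs, function(s) {
  n <- length(s)
  if (n < 3) return(character(0))
  paste(s[1:(n - 2)], s[2:(n - 1)], s[3:n], sep = "-")
}))
observed <- function(first, mid, last) sum(keys == paste(first, mid, last, sep = "-"))

# First-order expectation: count of first -> mid, times P(last | mid)
expected <- function(first, mid, last) {
  as.numeric(N2[first, mid]) * as.numeric(P[mid, last])
}

rbind(observed = c(ABC = observed("A", "B", "C"), ABE = observed("A", "B", "E")),
      expected = c(ABC = expected("A", "B", "C"), ABE = expected("A", "B", "E")))
```

A → B → C occurs twice although the first-order model expects it once, and A → B → E never occurs although it is expected once: what follows B depends on where the sequence came from. HYPA formalizes this comparison and tests whether such deviations exceed what its null model allows.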

build_hypa() identifies pathways that are significantly over-represented (occurring more often than expected under the first-order model) or under-represented:

hypa <- Nestimate::build_hypa(net, k = 3)
plot_simplicial(hypa, dismantled = TRUE)

11.5 Plotting individual clusters

Since each cluster is a netobject, all splot() styling works:

12 Comparison: plot_mcml vs. plot_mtna vs. plot_mlna

par(mfrow = c(1, 3), mar = c(1, 1, 2, 1))
plot_mcml(net, clusters, title = "plot_mcml\n(hierarchical)")
plot_mtna(net, clusters, show_labels = TRUE, label_abbrev = 4,
  title = "plot_mtna\n(flat clusters)")
plot_mlna(net, clusters, title = "plot_mlna\n(stacked layers)")

plot_mcml(): Two-layer hierarchical view. Bottom layer reveals individual dynamics; top layer shows macro structure. Best when you need both levels in a single figure.

plot_mtna(): Single flat layout with nodes inside cluster shapes. No vertical layering. Best for clean cluster maps.

plot_mlna(): Stacked layers in pseudo-3D. Each layer is an independent network level with inter-layer edges. Best for multilevel or multiplex data.

13 Parameter Quick Reference

| Category | Parameter | Default | Description |
|---|---|---|---|
| Input | x | (required) | Matrix, netobject, or mcml object |
| | cluster_list | NULL | Named list of node vectors |
| | mode | "weights" | "weights" or "tna" |
| | aggregation | "sum" | "sum", "mean", "max" |
| | minimum | 0 | Edge threshold |
| Layout | spacing | 3 | Cluster distance from center |
| | skew_angle | 60 | Perspective tilt (0–90) |
| | shape_size | 1.2 | Cluster shell radius |
| | top_layer_scale | c(0.8, 0.25) | Macro oval c(x, y) |
| | inter_layer_gap | 0.6 | Vertical gap multiplier |
| | node_radius_scale | 0.55 | Node spread inside shell (0–1) |
| Cluster nodes | node_size | 1.8 | Node size |
| | node_shape | "circle" | "circle", "square", "diamond", "triangle" |
| | node_border_color | "gray30" | Border color |
| Macro nodes | summary_size | 4 | Pie chart size |
| | cluster_shape | "circle" | Macro node shape |
| | summary_pie | "inits" | Pie meaning: "inits" or "self" |
| | summary_border_color | "gray20" | Border color |
| | summary_border_width | 2 | Border line width |
| | colors | auto | Cluster colors |
| Shells | shell_alpha | 0.15 | Fill transparency |
| | shell_border_width | 2 | Border width |
| Cluster edges | edge_width_range | c(0.3, 1.3) | Width range |
| | edge_alpha | 0.35 | Transparency |
| | edge_labels | FALSE | Show weight labels |
| | edge_label_size | 0.5 | Label text size |
| | edge_label_color | "gray40" | Label color |
| | edge_label_digits | 2 | Decimal places |
| Inter-cluster edges | between_edge_width_range | c(0.5, 2.0) | Width range |
| | between_edge_alpha | 0.6 | Transparency |
| | between_arrows | FALSE | Arrowheads |
| Macro edges | summary_edge_width_range | c(0.5, 2.0) | Width range |
| | summary_edge_alpha | 0.7 | Transparency |
| | summary_edge_labels | FALSE | Show weight labels |
| | summary_edge_label_size | 0.6 | Label text size |
| | summary_arrows | TRUE | Arrowheads |
| | summary_arrow_size | 0.10 | Arrowhead size |
| Inter-layer | inter_layer_alpha | 0.5 | Dashed line transparency |
| Labels | show_labels | TRUE | Show node labels |
| | label_size | 0.6 | Node label text size |
| | label_color | "gray20" | Node label color |
| | label_position | 3 | 1 = below, 2 = left, 3 = above, 4 = right |
| | label_abbrev | NULL | NULL, integer, or "auto" |
| | summary_labels | TRUE | Show cluster labels |
| | summary_label_size | 0.8 | Cluster label text size |
| | summary_label_color | "gray20" | Cluster label color |
| | summary_label_position | 3 | 1 = below, 2 = left, 3 = above, 4 = right |
| Title/Legend | title | NULL | Title text |
| | title_size | 1.2 | Title text size |
| | subtitle | NULL | Subtitle text |
| | subtitle_size | 0.9 | Subtitle text size |
| | legend | TRUE | Show legend |
| | legend_position | "right" | "right", "left", "top", "bottom" |
| | legend_size | 0.7 | Legend text size |
| | legend_pt_size | 1.2 | Legend symbol size |

References

LaRock, T., Scholtes, I., & Eliassi-Rad, T. (2020). HYPA: Efficient Detection of Path Anomalies in Time Series Data on Networks. In Proceedings of the 2020 SIAM International Conference on Data Mining (SDM) (pp. 460–468). SIAM.

Saqr, M., López-Pernas, S., Törmänen, T., Kaliisa, R., Misiejuk, K., & Tikka, S. (2025). Transition Network Analysis: A Novel Framework for Modeling, Visualizing, and Identifying the Temporal Patterns of Learners and Learning Processes. In Proceedings of the 15th International Learning Analytics and Knowledge Conference (LAK ’25) (pp. 351–361). ACM. doi:10.1145/3706468.3706513

Tikka, S., López-Pernas, S., & Saqr, M. (2025). tna: An R Package for Transition Network Analysis. Applied Psychological Measurement. doi:10.1177/01466216251348840

R version 4.6.0 (2026-04-24)

Platform: x86_64-pc-linux-gnu

Running under: Ubuntu 24.04.4 LTS

Matrix products: default

BLAS: /usr/lib/x86_64-linux-gnu/openblas-pthread/libblas.so.3

LAPACK: /usr/lib/x86_64-linux-gnu/openblas-pthread/libopenblasp-r0.3.26.so; LAPACK version 3.12.0

locale:

[1] LC_CTYPE=C.UTF-8 LC_NUMERIC=C LC_TIME=C.UTF-8

[4] LC_COLLATE=C.UTF-8 LC_MONETARY=C.UTF-8 LC_MESSAGES=C.UTF-8

[7] LC_PAPER=C.UTF-8 LC_NAME=C LC_ADDRESS=C

[10] LC_TELEPHONE=C LC_MEASUREMENT=C.UTF-8 LC_IDENTIFICATION=C

time zone: UTC

tzcode source: system (glibc)

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] Nestimate_0.4.3 cograph_2.3.6

loaded via a namespace (and not attached):

[1] vctrs_0.7.3 cli_3.6.6 knitr_1.51 rlang_1.2.0

[5] xfun_0.57 generics_0.1.4 S7_0.2.2 glasso_1.11

[9] data.table_1.18.4 jsonlite_2.0.0 labeling_0.4.3 glue_1.8.1

[13] htmltools_0.5.9 gridExtra_2.3 scales_1.4.0 rmarkdown_2.31

[17] grid_4.6.0 evaluate_1.0.5 tibble_3.3.1 fastmap_1.2.0

[21] yaml_2.3.12 lifecycle_1.0.5 compiler_4.6.0 codetools_0.2-20

[25] igraph_2.3.2 dplyr_1.2.1 RColorBrewer_1.1-3 pkgconfig_2.0.3

[29] farver_2.1.2 digest_0.6.39 R6_2.6.1 tidyselect_1.2.1

[33] parallel_4.6.0 pillar_1.11.1 magrittr_2.0.5 withr_3.0.2

[37] tools_4.6.0 gtable_0.3.6 ggplot2_4.0.3